Learning Transferable Deep Models for Land-Use Classification with High-Resolution Remote Sensing Images

A website is available here.

Motivation

One motivation, closely tied to the lack of a well-annotated large-scale land-use dataset, is the inadequate transferability of deep learning models.

Due to the diverse distributions of objects and spectral shifts caused by the different acquisition conditions of images, deep models trained on a certain set of annotated remote-sensing (RS) images may not be effective when dealing with images acquired by different sensors or from different geo-locations. In order to obtain satisfactory land-use classification on a remote-sensing image of interest, referred to as the target image, new specific annotated samples closely related to it are often necessary for model fine-tuning.

Learning transferable models for multi-source images

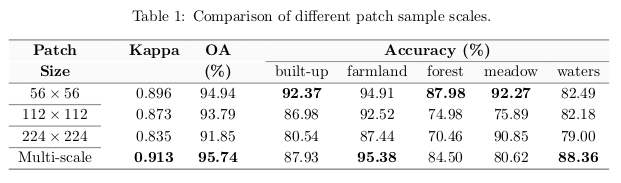

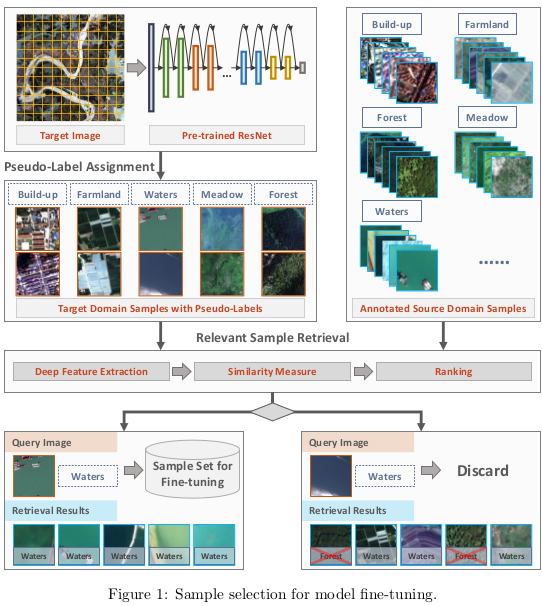

CNNs often fail to achieve satisfactory classification results on multi-source remote-sensing images because acquisition conditions vary dramatically between sources. To transfer CNN models to remote-sensing images acquired under different conditions, the authors introduce an automatic training sample acquisition scheme that requires no manual annotation. As shown in Fig. 1, the proposed scheme has two stages: pseudo-label assignment and relevant sample retrieval, where the similarity between a target image sample and the source domain is measured with the Euclidean distance. The category predictions and deep features generated by the CNN are used to search for target samples whose characteristics are similar to those of the source domain. These relevant samples and their corresponding category predictions, referred to as pseudo-labels, are then used to fine-tune the CNN model.
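The two stages can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function name `select_pseudo_labeled_samples`, the array layout, and the retention rule (keep a target sample only when its nearest source sample in feature space carries the same label as its pseudo-label) are assumptions made for illustration. The source only states that category predictions, deep features, and the Euclidean distance are involved.

```python
import numpy as np


def select_pseudo_labeled_samples(src_feats, src_labels, tgt_feats, tgt_probs):
    """Hypothetical sketch of pseudo-label assignment and relevant sample retrieval.

    src_feats:  (n_src, d) deep features of annotated source samples
    src_labels: (n_src,)   integer class labels of the source samples
    tgt_feats:  (n_tgt, d) deep features of unlabeled target samples
    tgt_probs:  (n_tgt, c) CNN class probabilities for the target samples
    """
    # Stage 1: the pseudo-label is the class with the highest predicted probability.
    pseudo_labels = tgt_probs.argmax(axis=1)

    # Stage 2: Euclidean distance from every target sample to every source sample.
    diffs = tgt_feats[:, None, :] - src_feats[None, :, :]
    dists = np.linalg.norm(diffs, axis=2)  # shape (n_tgt, n_src)

    # Assumed retention rule: keep a target sample when the label of its
    # nearest source sample agrees with its pseudo-label.
    nearest_src = dists.argmin(axis=1)
    keep = src_labels[nearest_src] == pseudo_labels

    return np.flatnonzero(keep), pseudo_labels[keep]


# Toy data: two source samples (one per class) and three target samples.
src_feats = np.array([[0.0, 0.0], [10.0, 10.0]])
src_labels = np.array([0, 1])
tgt_feats = np.array([[0.5, 0.2], [9.0, 9.5], [9.8, 10.1]])
tgt_probs = np.array([[0.9, 0.1], [0.2, 0.8], [0.7, 0.3]])

idx, labels = select_pseudo_labeled_samples(src_feats, src_labels, tgt_feats, tgt_probs)
print(idx, labels)
```

In this toy example the third target sample is predicted as class 0 but lies closest to a class 1 source sample, so it is discarded. The retained samples and their pseudo-labels would then form the fine-tuning set.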

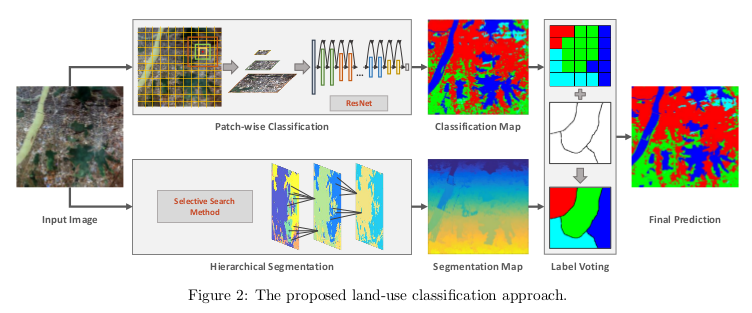

Patch-wise classification

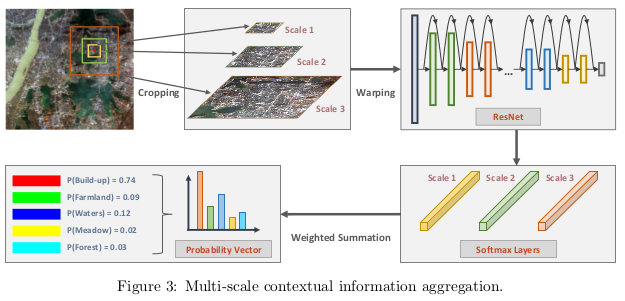

Ground objects show different characteristics at different spatial resolutions. To capture sufficient information about objects from a single-scale observation field, the authors exploit the attributes of the objects and their spatial distributions.

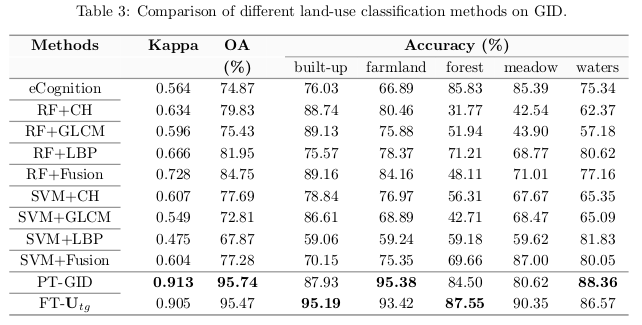

Results