Siamese LSTM-based fiber structural similarity network (FS2NET) for rotation invariant brain tractography segmentation

Motivation

- Streamline classification usually requires registration of subject data to a common space.

- Contribution: The main goal of this work is to provide a rotationally invariant approach for streamline segmentation based on a set of reference streamlines.

Main idea

- Learn the probability that two streamlines belong to the same bundle.

- Classify a streamline by assigning it the label of the most similar fiber in a given set of reference fibers.
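The labelling rule above can be sketched in a few lines. This is only an illustration, not the authors' code: `similarity` is a hypothetical stand-in for the learned network (here a simple function of the mean point-wise distance), and `assign_label` is a made-up helper name.

```python
import math

def similarity(a, b):
    """Hypothetical stand-in for the learned similarity network.

    Returns a score in (0, 1]; higher means 'more likely the same bundle'.
    Assumes both streamlines have the same number of 3D points.
    """
    mean_dist = sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)
    return math.exp(-mean_dist)

def assign_label(query, references):
    """Give `query` the label of the most similar reference streamline.

    `references` is a list of (streamline, label) pairs.
    """
    _, best_label = max(references, key=lambda ref: similarity(query, ref[0]))
    return best_label

# Toy reference set: one straight "bundle" along x, one along y.
references = [
    ([(0, 0, 0), (1, 0, 0), (2, 0, 0)], "bundle_x"),
    ([(0, 0, 0), (0, 1, 0), (0, 2, 0)], "bundle_y"),
]
query = [(0, 0.1, 0), (1, 0.1, 0), (2, 0.1, 0)]
label = assign_label(query, references)
```

In this toy case the query runs parallel to the x-axis reference, so it receives that reference's label.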

Rotation invariance

- Assumption: Similarity between fibers is learned. This is supposed to generalise to (a) misaligned and (b) rotated streamlines.

- Therefore, applying data augmentation to the reference set is sufficient for achieving rotation invariance, i.e. the augmentation is independent of the training stage.
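A minimal sketch of augmenting only the reference set is given below. The notes do not say which rotations the authors use, so the restriction to rotations about the z-axis, the angle list, and the helper names `rotate_z` and `augment_references` are all assumptions made for illustration.

```python
import math

def rotate_z(streamline, angle_deg):
    """Rotate a streamline (list of (x, y, z) points) about the z-axis."""
    t = math.radians(angle_deg)
    c, s = math.cos(t), math.sin(t)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in streamline]

def augment_references(references, angles_deg):
    """Return rotated copies of every (streamline, label) reference pair.

    Only the reference set grows; the similarity network is not retrained.
    """
    augmented = []
    for streamline, label in references:
        for angle in angles_deg:
            augmented.append((rotate_z(streamline, angle), label))
    return augmented

references = [([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)], "bundle_x")]
# Assumed angle grid; including 0 keeps the unrotated original.
augmented = augment_references(references, angles_deg=[0, 90, 180, 270])
```

A rotated test streamline can then be matched against the rotated copy closest to its own orientation, while the label stays the same.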

Method

-

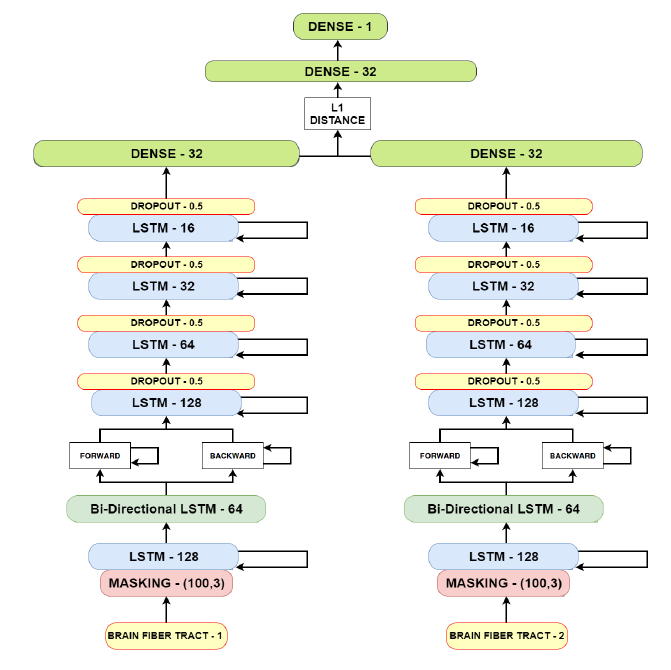

FS2Net:

- stacked LSTMs to arrive at a 32-entry feature vector

- “L1-distance” between feature vectors. Comment: The authors probably mean the element-wise difference vector, not a scalar distance.

- classification network: ‘similar’ vs ‘dissimilar’
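The comparison step can be sketched as follows, assuming the two 32-entry feature vectors are already available (the LSTM encoder is omitted). Using the element-wise absolute difference follows the reading in the comment above and is an assumption, as are the single logistic unit and the hand-picked, untrained `weights` and `bias`.

```python
import math

FEATURE_DIM = 32  # size of the feature vector mentioned in the notes

def abs_difference(f1, f2):
    """Element-wise absolute difference |f1 - f2| (a vector, not a scalar)."""
    return [abs(a - b) for a, b in zip(f1, f2)]

def same_bundle_probability(f1, f2, weights, bias):
    """Logistic unit on the difference vector; near 1 means 'similar'."""
    diff = abs_difference(f1, f2)
    logit = sum(w * d for w, d in zip(weights, diff)) + bias
    return 1.0 / (1.0 + math.exp(-logit))

# Hypothetical parameters: larger feature differences lower the score.
weights = [-1.0] * FEATURE_DIM
bias = 2.0

f_a = [0.5] * FEATURE_DIM
f_b = [0.5] * FEATURE_DIM    # identical features
f_c = [-0.5] * FEATURE_DIM   # very different features

p_same = same_bundle_probability(f_a, f_b, weights, bias)
p_diff = same_bundle_probability(f_a, f_c, weights, bias)
```

Keeping the difference as a vector lets the classifier weight each feature dimension separately, which a single scalar distance would not allow.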

Experiments

-

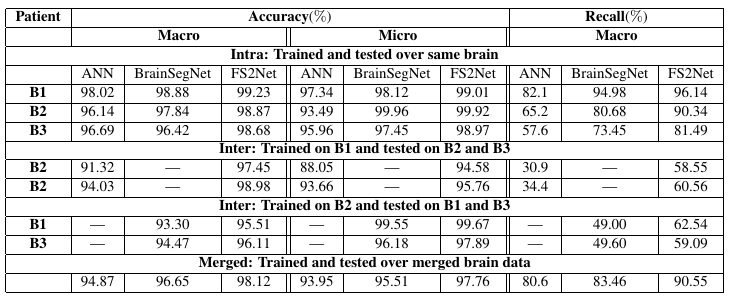

Comparison to two of their own works: ANN and BrainSegNet

- Comment: Doubtful if this can be considered as ‘state of the art’.

Training:

- Batch size 11 with 1000 batches per epoch, i.e. only 11K training samples per epoch. The number of epochs is not clear.

Nomenclature

-

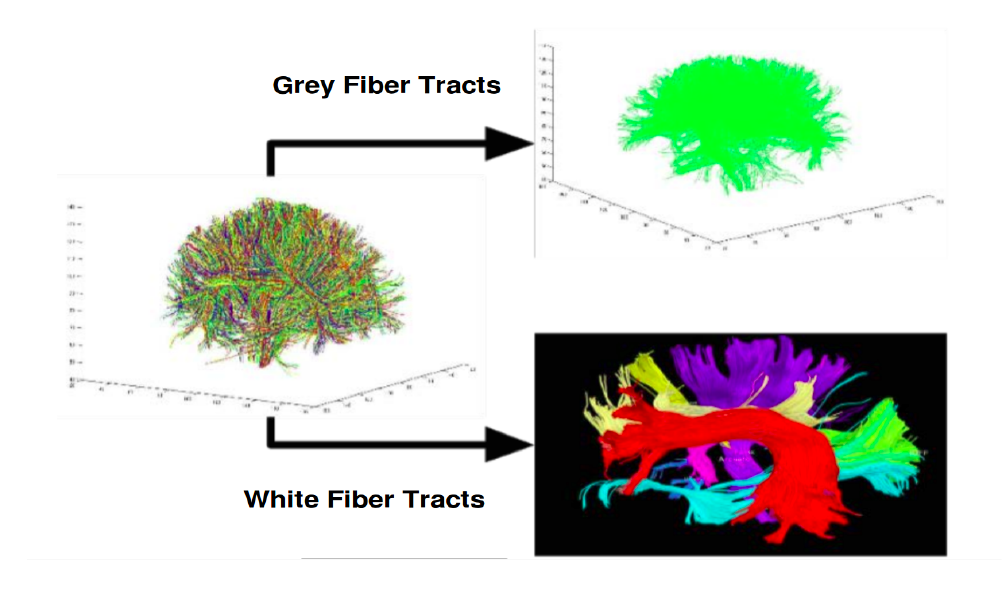

macro classification - Gray matter fibres vs White matter fibres

- probably: U-shaped or short fibres vs long range fibres (?)

- Micro classification: labelling WM fibres into 8 subclasses

- Recall: fraction of correct WM labels in macro classification
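One reading of the recall definition above is the standard one: among streamlines whose true macro label is WM, the fraction that are also predicted as WM. The sketch below assumes that reading; the labels and the `recall` helper are illustrative only, and the numbers are toy data, not results from the paper.

```python
def recall(true_labels, predicted_labels, positive="WM"):
    """Fraction of truly positive items that were predicted positive."""
    predictions_for_positives = [
        pred for true, pred in zip(true_labels, predicted_labels)
        if true == positive
    ]
    if not predictions_for_positives:
        raise ValueError("no items of the positive class")
    hits = sum(pred == positive for pred in predictions_for_positives)
    return hits / len(predictions_for_positives)

# Toy macro labels: 4 true WM streamlines, 3 of them predicted as WM.
true_labels = ["WM", "WM", "WM", "WM", "GM", "GM"]
predicted = ["WM", "WM", "WM", "GM", "GM", "WM"]
wm_recall = recall(true_labels, predicted)  # 3 / 4
```

Note that the GM streamline wrongly predicted as WM does not affect recall; it would lower precision instead.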

Data

- 3 subjects, 250K streamlines each

- preprocessing: keep only the 75% of streamline points with the highest curvature (per fibre)

- each streamline: max of 100 points (zero padded)
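The two preprocessing steps above could look roughly like this. The notes do not say how curvature is estimated or how endpoints and ties are handled, so the turning-angle proxy, the rule that endpoints are always kept, and the helper names are assumptions.

```python
import math

def turning_angle(p_prev, p, p_next):
    """Angle (radians) between consecutive segments; a simple curvature proxy."""
    u = [b - a for a, b in zip(p_prev, p)]
    v = [b - a for a, b in zip(p, p_next)]
    norm = math.hypot(*u) * math.hypot(*v)
    if norm == 0.0:
        return 0.0
    cos_angle = sum(a * b for a, b in zip(u, v)) / norm
    return math.acos(max(-1.0, min(1.0, cos_angle)))

def keep_high_curvature(points, keep_fraction=0.75):
    """Keep the `keep_fraction` of points with highest curvature, in order."""
    n = len(points)
    # Assumption: endpoints get an infinite score so they are always kept.
    scores = [math.inf] + [
        turning_angle(points[i - 1], points[i], points[i + 1])
        for i in range(1, n - 1)
    ] + [math.inf]
    n_keep = max(2, round(keep_fraction * n))
    ranked = sorted(range(n), key=lambda i: scores[i], reverse=True)
    kept = sorted(ranked[:n_keep])  # restore the original point order
    return [points[i] for i in kept]

def zero_pad(points, max_points=100):
    """Truncate or pad with (0, 0, 0) so every streamline has `max_points`."""
    points = list(points[:max_points])
    return points + [(0.0, 0.0, 0.0)] * (max_points - len(points))

# L-shaped toy streamline with 8 points; the corner is at (3, 0, 0).
points = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (3, 0, 0),
          (3, 1, 0), (3, 2, 0), (3, 3, 0), (3, 4, 0)]
reduced = keep_high_curvature(points)  # 6 of 8 points survive
padded = zero_pad(reduced)
```

Points on straight segments all have zero turning angle, so which of them are dropped is decided by sort order here; a real pipeline would need an explicit tie-breaking rule.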

Registered data

- FS2Net achieves top performance compared to ANN and BrainSegNet in all experiments.

- FS2Net shows better recall, hinting at greater robustness to the imbalance between the GM and WM classes (macro).

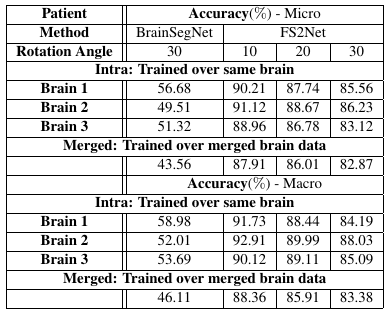

Unregistered data

- FS2Net is quite robust to rotations of the test streamlines.

Comment

- Very nice idea. However, the evaluation has limited power to demonstrate the real value of the approach for streamline segmentation.